ImportError: Using `load_in_8bit=True` requires Accelerate: `pip install accelerate` and the latest


Error message

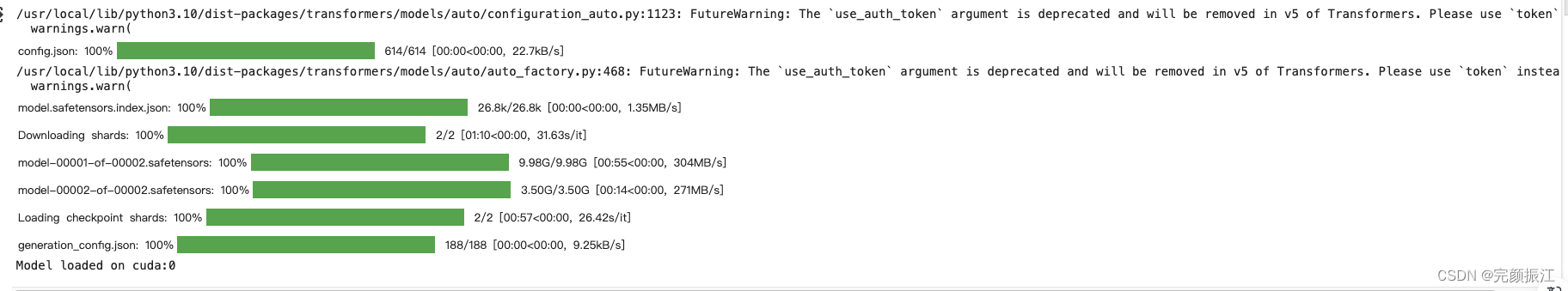

/usr/local/lib/python3.10/dist-packages/transformers/models/auto/configuration_auto.py:1006: FutureWarning: The `use_auth_token` argument is deprecated and will be removed in v5 of Transformers.
  warnings.warn(
/usr/local/lib/python3.10/dist-packages/transformers/models/auto/auto_factory.py:479: FutureWarning: The `use_auth_token` argument is deprecated and will be removed in v5 of Transformers.
  warnings.warn(
Traceback (most recent call last):
  File "/colab/./1.py", line 26, in <module>
    model = transformers.AutoModelForCausalLM.from_pretrained(
  File "/usr/local/lib/python3.10/dist-packages/transformers/models/auto/auto_factory.py", line 563, in from_pretrained
    return model_class.from_pretrained(
  File "/usr/local/lib/python3.10/dist-packages/transformers/modeling_utils.py", line 2487, in from_pretrained
    raise ImportError(

ImportError: Using `load_in_8bit=True` requires Accelerate: `pip install accelerate` and the latest version of bitsandbytes `pip install -i https://test.pypi.org/simple/ bitsandbytes` or pip install bitsandbytes`

The following commands finally resolved it:

pip3 uninstall transformers --yes

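pip install transformers==4.30.0

After the reinstall, the script that had raised the error was run again; the full script appears later in this post.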
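Before re-running, it can help to confirm which versions are actually installed, since the ImportError above names both `accelerate` and `bitsandbytes` and the fix pins `transformers`. The following is a minimal sketch using only the standard library; the helper name `installed_versions` is hypothetical and not part of any of these packages. It only reports what is installed and does not check whether the versions are compatible with each other.

```python
from importlib import metadata

# Packages named in the ImportError and in the fix above.
PACKAGES = ("transformers", "accelerate", "bitsandbytes")


def installed_versions(packages=PACKAGES):
    """Return {package name: version string, or None if it is not installed}."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            # The package is missing from the current environment.
            versions[name] = None
    return versions


if __name__ == "__main__":
    for name, version in installed_versions().items():
        print(f"{name}: {version or 'NOT INSTALLED'}")
```

If any entry prints `NOT INSTALLED`, installing that package with pip is the first thing to try before changing the `transformers` version.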

from torch import cuda, bfloat16
import transformers

model_id = 'meta-llama/Llama-2-7b-chat-hf'

device = f'cuda:{cuda.current_device()}' if cuda.is_available() else 'cpu'

# set quantization configuration to load large model with less GPU memory
# this requires the `bitsandbytes` library
bnb_config = transformers.BitsAndBytesConfig(
    load_in_4bit=True,
    bnb_4bit_quant_type='nf4',
    bnb_4bit_use_double_quant=True,
    bnb_4bit_compute_dtype=bfloat16
)

# begin initializing HF items, you need an access token
hf_auth = 'xxxxxxxxx-dddd---sdfsdfsdff'
model_config = transformers.AutoConfig.from_pretrained(
    model_id,
    use_auth_token=hf_auth
)

model = transformers.AutoModelForCausalLM.from_pretrained(
    model_id,
    trust_remote_code=True,
    config=model_config,
    quantization_config=bnb_config,
    device_map='auto',
    use_auth_token=hf_auth
)

# enable evaluation mode to allow model inference
model.eval()

print(f"Model loaded on {device}")

Execution result

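The FutureWarning lines in the log note that `use_auth_token` is deprecated, while the script pinned to transformers 4.30.0 still passes it. A small helper can choose the keyword by version so the same script works on both sides of the change. This is only a sketch: the helper name `auth_kwargs` is hypothetical, and the cutoff `(4, 31)` for when `token` became the preferred keyword is an assumption that should be checked against the release notes of the installed version.

```python
def auth_kwargs(token, transformers_version, token_since=(4, 31)):
    """Build the keyword argument used to pass a Hugging Face access token.

    token_since is an ASSUMPTION about the first (major, minor) release that
    prefers `token` over `use_auth_token`; adjust it if the release notes differ.
    """
    # Compare only the major and minor parts, e.g. "4.30.0" -> (4, 30).
    major_minor = tuple(int(part) for part in transformers_version.split(".")[:2])
    key = "token" if major_minor >= token_since else "use_auth_token"
    return {key: token}


# Intended use with the script above (not executed here):
#   kwargs = auth_kwargs(hf_auth, transformers.__version__)
#   model_config = transformers.AutoConfig.from_pretrained(model_id, **kwargs)
```

Passing the version in as a string keeps the helper independent of `transformers` itself, so it can be checked without loading the library.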